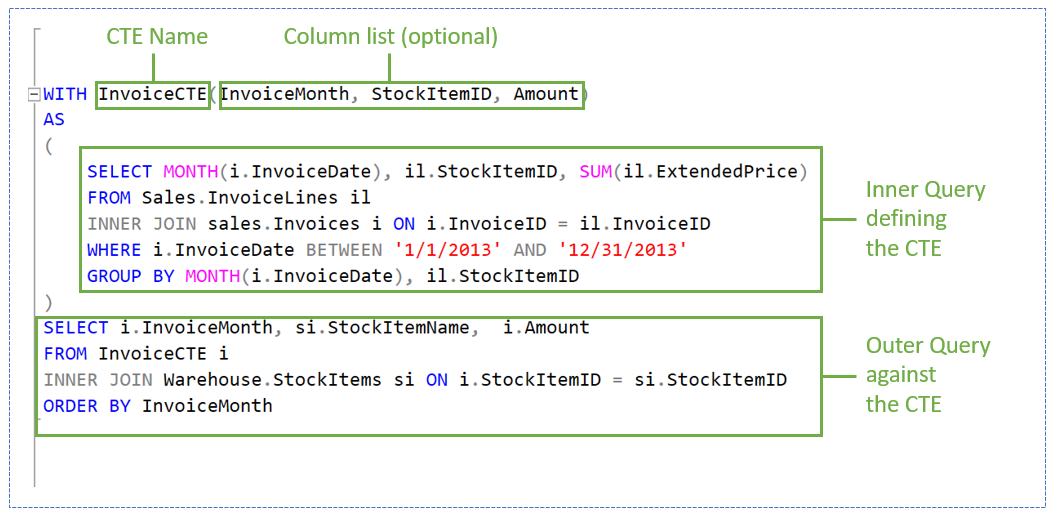

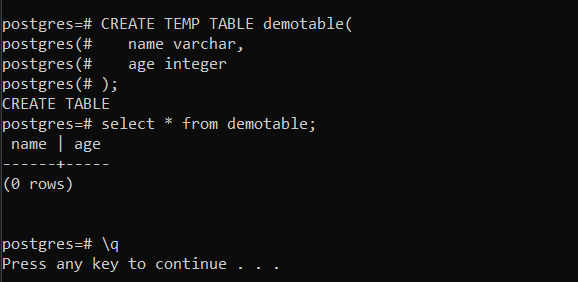

I found that if I compared that with yours I’d seeĬPU time = 1062 ms, elapsed time = 299 ms. our Bad™) I see cases where we’re creating temp tables with ID as Primary Key with Identity! Here’s my test proc:ĬREATE TABLE #MyUsers2 (Id INT IDENTITY (1, 1) Primary key, DisplayName NVARCHAR(40)) ĮXEC dbo.usp_TempTable_Heap2 N’Brent Ozar’ ĬPU time = 983 ms, elapsed time = 364 ms. But it was a good one because I found an tuning opportunity because of this post. Well, I’ve just emerged from a rabbit hole this post sent me down. As an aside, I wonder if some of the sequential temptable1-temptable2-temptable3 type coding is more a result of the developer trying to wrap their head around the problem logically, and that’s just how they work through it…even if it is suboptimal in performance (which likely they aren’t even thinking about at that point). But again, here we are in violent agreement as you acknowledge either might be superior, depending on the situation…and I agree with the principal of trying to let the Optimizer have the most freedom to do its thing (see also query hints) as a default, before trying to force its hand…it is usually better at it than us (until eventually it isn’t). I’ve had good results moving things in either direction…depending on the query and the problems involved. So there’s a balance to be struck with either artificially breaking apart a query into steps (not trusting the Optimizer and forcing the logic into separate stages) and collapsing it into one logical statement and trusting the Optimizer to do its thing. I’ve also seen instances where we’ve had to break apart a monstrosity of a single statement (hundreds/thousands of lines with CTEs and subqueries) into more atomic steps with temp tables because the Optimizer just didn’t do great picking a “good enough” plan given the complexity. Lipstick on a pig, maybe, but sometimes the index ends up improving things (when a major refactor isn’t practical). Although sometimes we might have a large many-step proc with loads of temp tables…suboptimal design usually, but occasionally improved with careful indexing. I guess it depends how much control you have on the codebase…if your temp table is literally just going to be scanned and joined to another table, then yeah, indexes are useless overhead. SQL Server will reorder operations – it doesn’t have to execute the first CTE first, then the second CTE second, and so forth.Īs is often the case with your advice, you deftly bait me into wanting to argue specific exceptions (never shrink a db! index frag doesn’t matter! and other greatest hits) when I actually agree with the principal, haha. I am by no means saying that CTEs are always better than temp tables – often it’s the reverse – but if you’re hitting a performance tuning wall on queries that use temp tables, try converting them to CTEs. If you have a multi-step process that involves a lot of filtering and joining, let SQL Server recalculate where the filtering logic should happen.

If you’re only going to access the data once, leave it as a heap. Indexes make the most sense when the temp table is going to be reused repeatedly across lots of statements that all do filtering or joining or sorting using the same keys. When loading temp tables – or any objects, really – be ruthless about filtering as early as possible. Only load them with the data you actually need. Now we’re going to have a CPU problem if a bunch of these queries run simultaneously.Īnd it’s still not as fast as our original solution, the heap. Note that CPU time is higher than elapsed time – that’s your clue that the query went parallel across more CPU cores. A big part of the reason why it’s faster is that now the query is going parallel.

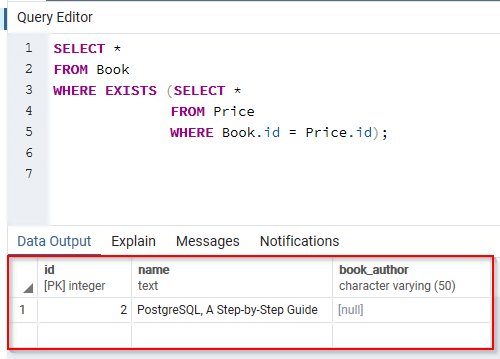

Įxecution time dropped from 57 seconds down to 18 seconds, but there’s a catch. Here we go:ĬPU time = 42107 ms, elapsed time = 18145 ms. It can’t be a unique index because multiple users share the same DisplayName, but that’s okay. Instead of a heap plus a nonclustered index on DisplayName, we can just define a single structure for the temp table: a clustered index on DisplayName. We can reduce the overhead of the process by only having one structure to store the data. We can’t do them both in parallel at the same time because the nonclustered index has to be able to point back to a specific row in the heap – and to do that, we need its physical location, like I talk about in How to Think Like the Engine. Part of the problem is that our heap (table) has to be loaded first, and then the data has to be sorted by DisplayName, and then an index has to be created on DisplayName. Sure, the second statement drops from 1,017 milliseconds to 75, but…who cares?!?! The additional overhead of building the index is much, much higher, making the query take ten times longer overall. CPU time = 56594 ms, elapsed time = 56850 ms.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed